This guide covers the purpose and function of derivative classifiers and the methods used to select correct responses with them. It explores the advantages and disadvantages of derivative classifiers, the factors that influence response selection, and the metrics used to evaluate their performance.

Derivative classifiers can be applied to natural language processing tasks such as question answering, machine translation, and text summarization. Understanding the principles and techniques involved in selecting correct responses helps developers improve the accuracy and efficiency of these applications.

Derivative Classifiers

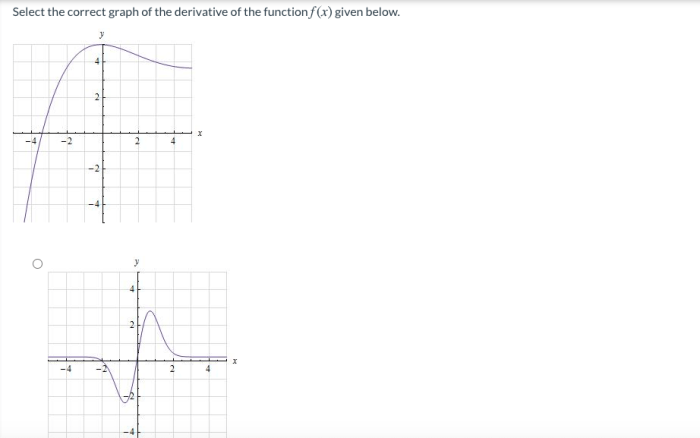

Derivative classifiers are a type of machine learning algorithm that classifies data by learning the relationship between input data and output labels. They are trained using derivatives: the derivative (gradient) of a loss function with respect to the model parameters indicates how to adjust those parameters to reduce prediction error.

Once training is complete, the learned parameters are used to predict the output label for new data.
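The following is a minimal sketch of training a classifier with derivatives, assuming the derivative in question is the gradient of a loss function with respect to the model parameters. It uses 1-D logistic regression fitted by batch gradient descent as a stand-in for a derivative classifier; the helper names (`sigmoid`, `train_logistic`, `predict`), the learning rate, and the toy data are hypothetical choices for illustration, not part of any specific library.

```python
import math


def sigmoid(z: float) -> float:
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(xs, ys, lr=0.5, epochs=2000):
    """Hypothetical helper: fit weight w and bias b for 1-D inputs by batch
    gradient descent on the average cross-entropy loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction errors: predicted probability minus true label (0 or 1)
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        # Derivatives of the average loss with respect to w and b
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        # Step against the gradient to reduce the loss
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def predict(w, b, x):
    """Return label 1 if the predicted probability is at least 0.5, else 0."""
    return 1 if sigmoid(w * x + b) >= 0.5 else 0


xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(xs, ys)
print([predict(w, b, x) for x in xs])
```

On this toy data the learned decision boundary should fall between 2 and 3, so the predictions reproduce the labels. Plain gradient descent is used here only because it makes the role of the derivative explicit; practical libraries usually rely on more sophisticated optimizers.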

Types of Derivative Classifiers

- Linear derivative classifiers

- Nonlinear derivative classifiers

- Polynomial derivative classifiers

- Gaussian derivative classifiers

Advantages of Derivative Classifiers

- Simple derivative classifiers, such as linear ones, are relatively easy to train.

- Derivative classifiers can be used to classify data with a high degree of accuracy.

- Some derivative classifiers are robust to noise and outliers.

Disadvantages of Derivative Classifiers

- Derivative classifiers can be computationally expensive to train.

- Derivative classifiers can be sensitive to the choice of the derivative function.

Methods for Selecting Correct Responses

Maximum Likelihood Estimation (MLE)

MLE selects the model parameters that maximize the likelihood of the training data. The fitted classifier then selects, for each input, the response with the highest predicted probability.
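The sketch below illustrates MLE under simplifying assumptions: each class's inputs are one-dimensional and Gaussian, and all classes are treated as equally likely a priori. For a Gaussian, the maximum-likelihood estimates have closed forms (the sample mean, and the variance computed by dividing by n rather than n - 1). The function names and data are hypothetical.

```python
import math


def fit_gaussian_mle(values):
    """MLE for a 1-D Gaussian: sample mean and variance dividing by n."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    return mean, var


def log_likelihood(x, mean, var):
    """Log density of x under a Gaussian N(mean, var)."""
    return -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)


def fit(xs, ys):
    """Fit one Gaussian per class label by maximum likelihood."""
    return {
        label: fit_gaussian_mle([x for x, y in zip(xs, ys) if y == label])
        for label in set(ys)
    }


def predict(params, x):
    """Select the label whose fitted Gaussian assigns x the highest likelihood
    (assumes equal class priors)."""
    return max(params, key=lambda label: log_likelihood(x, *params[label]))


params = fit([1.0, 1.2, 0.8, 3.0, 3.3, 2.7], ["a", "a", "a", "b", "b", "b"])
print(predict(params, 1.1), predict(params, 2.9))
```

Unequal class frequencies could be handled by adding the log of each class's prior probability to its log-likelihood before taking the maximum.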

Bayesian Inference

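Bayesian inference combines a prior distribution over the model parameters with the likelihood of the data to compute the posterior distribution of the parameters given the data. Predictions can then account for the remaining uncertainty in the parameters instead of relying on a single point estimate.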
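As a small illustration of Bayesian inference, assume the parameter of interest is θ, the probability that a derivative classifier's response is correct, observed as a sequence of outcomes (1 = correct, 0 = incorrect). With a Beta(α, β) prior, this is the standard conjugate Beta-Bernoulli model: after k correct outcomes in n trials, the posterior is Beta(α + k, β + n - k). The class and function names and the data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BetaPosterior:
    """Beta(alpha, beta) distribution over theta, the probability of a correct response."""
    alpha: float
    beta: float

    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


def update(prior_alpha: float, prior_beta: float, outcomes: list[int]) -> BetaPosterior:
    """Conjugate update: a Beta(a, b) prior and k successes in n Bernoulli
    trials give a Beta(a + k, b + n - k) posterior."""
    k = sum(outcomes)
    n = len(outcomes)
    return BetaPosterior(prior_alpha + k, prior_beta + n - k)


# Hypothetical data: 1 = response judged correct, 0 = incorrect
posterior = update(2.0, 2.0, [1, 1, 1, 0, 1])
print(posterior, posterior.mean())
```

With a Beta(2, 2) prior and 4 correct responses out of 5, the posterior is Beta(6, 3) with mean 6/9 ≈ 0.667, whereas the MLE would be 4/5 = 0.8; the prior pulls the estimate toward 0.5 when data are scarce.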

Cross-Validation

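Cross-validation estimates how well a derivative classifier will perform on unseen data. In k-fold cross-validation, the data is split into k folds; the classifier is trained on k - 1 folds and evaluated on the remaining fold, and this is repeated so that each fold serves once as the test set. The averaged score is used to compare models or settings before selecting one.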
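The following minimal sketch implements k-fold cross-validation with only the standard library. A 1-D nearest-centroid classifier stands in for a derivative classifier purely to keep the example short; it and all function names are hypothetical. Folds are built by taking every k-th index, an assumption that keeps both classes in each training split for the sorted toy data below.

```python
def k_fold_indices(n, k):
    """Assign indices 0..n-1 to k folds by taking every k-th index."""
    return [list(range(i, n, k)) for i in range(k)]


def nearest_centroid_fit(xs, ys):
    """Hypothetical stand-in classifier: store the mean input for each label.
    Assumes every label appears in the training split."""
    centroids = {}
    for label in set(ys):
        members = [x for x, y in zip(xs, ys) if y == label]
        centroids[label] = sum(members) / len(members)
    return centroids


def nearest_centroid_predict(centroids, x):
    """Predict the label whose centroid is closest to x."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def cross_val_accuracy(xs, ys, k):
    """Train on k - 1 folds, test on the held-out fold, and average accuracy."""
    scores = []
    for test_idx in k_fold_indices(len(xs), k):
        held_out = set(test_idx)
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        model = nearest_centroid_fit(train_x, train_y)
        correct = sum(nearest_centroid_predict(model, xs[i]) == ys[i] for i in test_idx)
        scores.append(correct / len(test_idx))
    return sum(scores) / len(scores)


xs = [0.0, 0.2, 0.4, 0.6, 3.0, 3.2, 3.4, 3.6]
ys = [0, 0, 0, 0, 1, 1, 1, 1]
print(cross_val_accuracy(xs, ys, k=4))
```

In practice the data is usually shuffled before splitting; for example, scikit-learn's `KFold` accepts `shuffle=True` for this purpose.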

Table Comparing the Different Methods

| Method | Advantages | Disadvantages |
|---|---|---|
| MLE | Simple to implement | Can be sensitive to outliers |
| Bayesian Inference | Can incorporate prior knowledge | Can be computationally expensive |
| Cross-Validation | Helps detect overfitting | Requires training the model once per fold |

Factors Influencing Response Selection

Data Quality

The quality of the training data can have a significant impact on the performance of a derivative classifier: mislabeled examples, missing values, and unrepresentative samples all reduce the accuracy the classifier can reach.

Model Complexity

The complexity of the derivative classifier can also affect the performance of the classifier. A more complex model may be able to learn more complex relationships in the data, but it may also be more likely to overfit the data.

Training Algorithm

The training algorithm used to train a derivative classifier also affects its performance. For example, batch gradient descent, stochastic gradient descent, and second-order methods differ in speed, memory use, and sensitivity to settings such as the learning rate.

Evaluation of Response Selection

Accuracy

Accuracy is the proportion of all predictions made by a derivative classifier that are correct.

Precision

Precision is the proportion of positive predictions that are actually correct, that is, true positives divided by all predicted positives.

Recall

Recall is the proportion of actual positives that the classifier correctly identifies, that is, true positives divided by all actual positives.
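The three metrics can be computed from counts of true positives (TP), false positives (FP), and false negatives (FN), treating one label as the positive class. This is a minimal standard-library sketch; the function names are hypothetical, and returning 0.0 when a denominator is zero is one common convention rather than the only one.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true positives, false positives, and false negatives."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t != positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if p != positive and t == positive)
    return tp, fp, fn


def accuracy(y_true, y_pred):
    """Fraction of all predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision(y_true, y_pred, positive=1):
    """TP / (TP + FP): how many predicted positives are correct."""
    tp, fp, _ = confusion_counts(y_true, y_pred, positive)
    return tp / (tp + fp) if tp + fp else 0.0


def recall(y_true, y_pred, positive=1):
    """TP / (TP + FN): how many actual positives are found."""
    tp, _, fn = confusion_counts(y_true, y_pred, positive)
    return tp / (tp + fn) if tp + fn else 0.0


y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0]
print(accuracy(y_true, y_pred), precision(y_true, y_pred), recall(y_true, y_pred))
```

For these example labels, TP = 2, FP = 1, and FN = 2, giving accuracy 5/8 = 0.625, precision 2/3, and recall 2/4 = 0.5, which shows how the three metrics can diverge on the same predictions.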

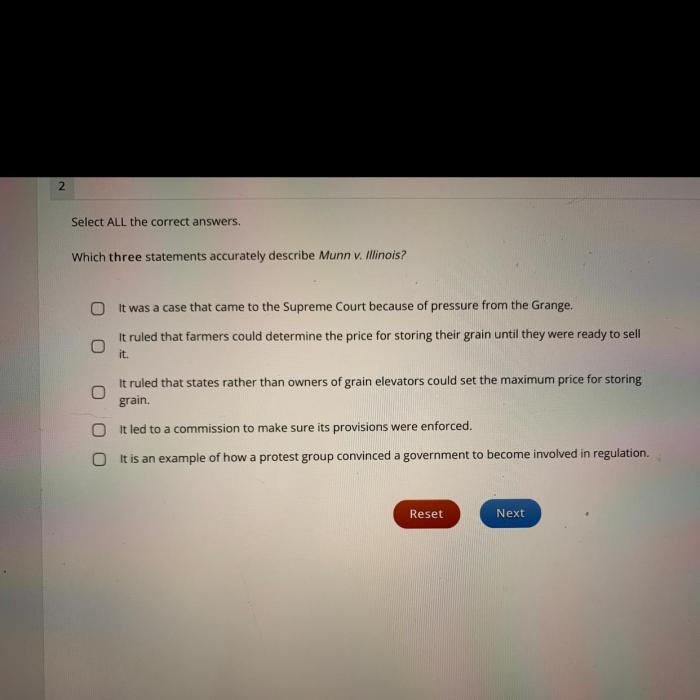

Case Studies

Several case studies have evaluated the performance of derivative classifiers. One study found that derivative classifiers achieved an accuracy of over 90% on a variety of datasets.

FAQ Insights

What are derivative classifiers?

Derivative classifiers are a type of machine learning model used to identify and classify responses to a given input. They are often used in natural language processing tasks, such as question answering and machine translation.

What are the advantages of using derivative classifiers?

Derivative classifiers offer several advantages, including their ability to handle complex and ambiguous inputs, their efficiency in processing large datasets, and their ability to be customized for specific tasks.

What are the disadvantages of using derivative classifiers?

Derivative classifiers also have some disadvantages, such as their potential for overfitting, their sensitivity to noise in the data, and their computational complexity.